How Do I Protect My Website Against Bots?

Protecting your website against malicious bots involves a multi-layered approach: implementing a Web Application Firewall (WAF) like Cloudflare or Sucuri, setting rate limits to throttle excessive requests, using CAPTCHAs on forms, and monitoring traffic for anomalies. These tools distinguish human behavior from automated scripts, blocking spam, scrapers, and credential stuffing.

Key Bot Protection Techniques:

- Web Application Firewalls (WAF): Use a WAF (e.g., Cloudflare, Imperva) to filter malicious traffic, block known bad IP addresses, and inspect HTTP/HTTPS requests before they reach your server.

- Rate Limiting & Throttling: Limit the number of requests a single user or IP can make in a given timeframe to prevent content scraping and brute-force login attempts.

- CAPTCHA and Challenges: Implement CAPTCHA (e.g., reCAPTCHA, Friendly Captcha) on login, comment, and contact forms to distinguish humans from bots.

- Behavioral Analysis & Machine Learning: Use advanced bot management solutions that analyze mouse movements, navigation, and scroll patterns to identify non-human traffic.

- Honeypots: Create hidden fields or links in your HTML that are invisible to humans but visible to bots. When a bot interacts with one of these "traps," it can be identified and blocked.

- Review robots.txt: Use robots.txt to guide good bots (like search engines) away from sensitive areas, though malicious bots often ignore this file.

- Block Known Bad User Agents & Hosting Providers: Block requests from outdated browsers and known data center proxy networks used for launching attacks.
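To make the robots.txt point concrete, here is a minimal sketch of how a well-behaved crawler would interpret such a file, using Python's standard `urllib.robotparser`. The rules, paths, and the `is_allowed` helper are hypothetical placeholders rather than recommendations for any particular site. A malicious bot can simply skip this check, so robots.txt is guidance and not a security control.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; the paths are illustrative placeholders.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Disallow: /login
"""


def is_allowed(user_agent: str, url: str) -> bool:
    """Return True if a rule-abiding crawler may fetch the given URL."""
    parser = RobotFileParser()
    parser.parse(ROBOTS_TXT.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    for url in ("https://example.com/admin/users", "https://example.com/blog/post"):
        print(url, is_allowed("Googlebot", url))
```

Only crawlers that choose to run a check like this are affected, which is why the other techniques in this list are still needed.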

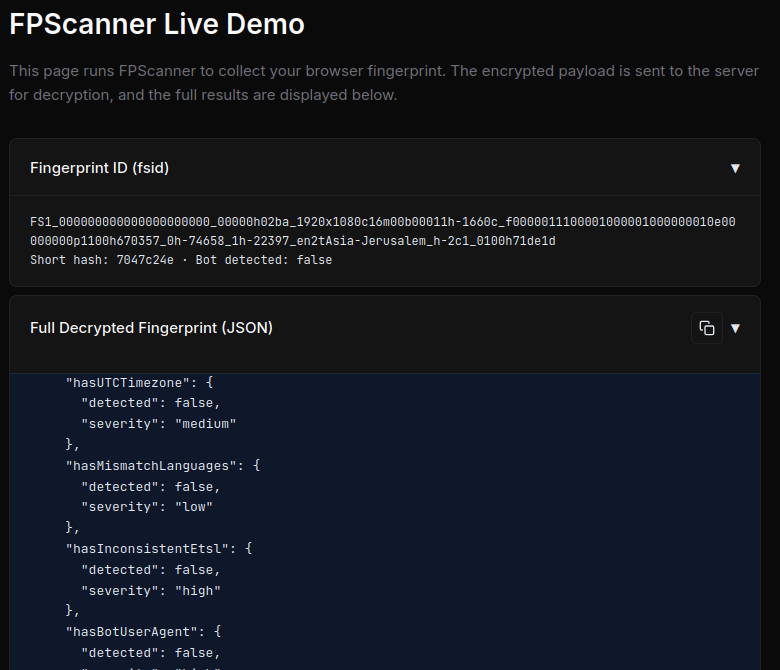

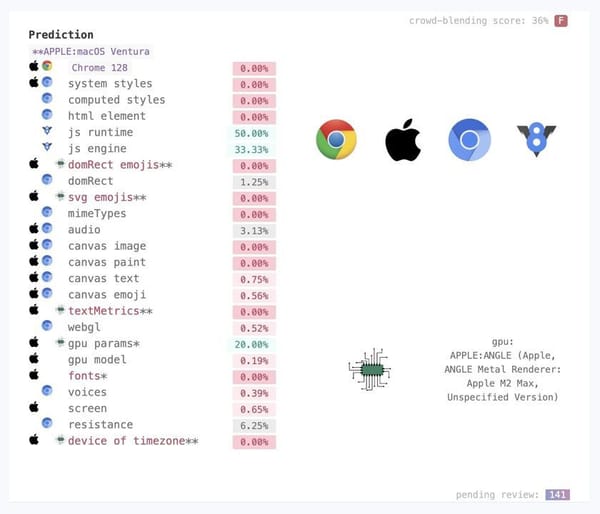
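The rate-limiting technique listed above can be sketched as a small sliding-window counter. This is an illustrative in-memory version only: the class name `SlidingWindowLimiter`, its parameters, and the example limits are assumptions, and a real deployment would more likely rely on the WAF, the reverse proxy, or a shared store so limits hold across multiple servers.

```python
import time
from collections import defaultdict, deque
from typing import Optional


class SlidingWindowLimiter:
    """Allow at most max_requests per client within window_seconds."""

    def __init__(self, max_requests: int, window_seconds: float) -> None:
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        # Maps a client identifier (e.g. an IP address) to request timestamps.
        self._hits = defaultdict(deque)

    def allow(self, client_id: str, now: Optional[float] = None) -> bool:
        """Record the request and return True, or return False if over the limit."""
        if now is None:
            now = time.monotonic()
        hits = self._hits[client_id]
        # Drop timestamps that have aged out of the window.
        while hits and now - hits[0] >= self.window_seconds:
            hits.popleft()
        if len(hits) >= self.max_requests:
            return False  # Caller would typically respond with HTTP 429.
        hits.append(now)
        return True


if __name__ == "__main__":
    limiter = SlidingWindowLimiter(max_requests=3, window_seconds=60)
    for second in range(5):
        print(second, limiter.allow("203.0.113.7", now=float(second)))
```

Keying on IP address alone is a simplification; bots that rotate addresses through proxy networks will spread their requests across many keys, which is one reason rate limiting is combined with the other layers.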

- Device Fingerprinting: Identify bots by detecting patterns in browser characteristics, such as screen resolution, installed fonts, and plugins.

- Monitor Analytics: Watch for sudden traffic spikes, abnormally high bounce rates, and high numbers of failed login attempts, which often indicate bot activity.
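The honeypot idea from the list can be sketched on the server side as a check for a form field that real visitors never see. The field name `website` and the helper `is_probable_bot` are hypothetical; the sketch assumes the form includes an extra input hidden with CSS and that submissions arrive as URL-encoded form bodies.

```python
from urllib.parse import parse_qs

# Hypothetical name of the hidden "trap" input in the form.
HONEYPOT_FIELD = "website"


def is_probable_bot(form_body: str) -> bool:
    """Return True if the hidden honeypot field was filled in."""
    fields = parse_qs(form_body, keep_blank_values=True)
    values = fields.get(HONEYPOT_FIELD, [])
    # Humans leave the hidden field empty; many simple bots fill every input.
    return any(value.strip() for value in values)


if __name__ == "__main__":
    print(is_probable_bot("name=Ann&message=Hi&website="))
    print(is_probable_bot("name=x&message=y&website=http%3A%2F%2Fspam.example"))
```

A filled-in trap is a strong hint rather than proof, since browser autofill can occasionally populate hidden inputs, so it may be safer to discard or flag such submissions quietly than to block the sender outright.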

Though, you could always rely on https://button.solutions 😄